Before I wrote a single line of game logic, I had to answer one question: who decides what's true?

In a multiplayer game, two players might press a button at the same time. Their clocks don't agree. Their internet connections have different latency. If each client runs its own simulation, they'll disagree on what happened — and in a game where a split-second determines whether you dodge an explosion, disagreement means broken gameplay.

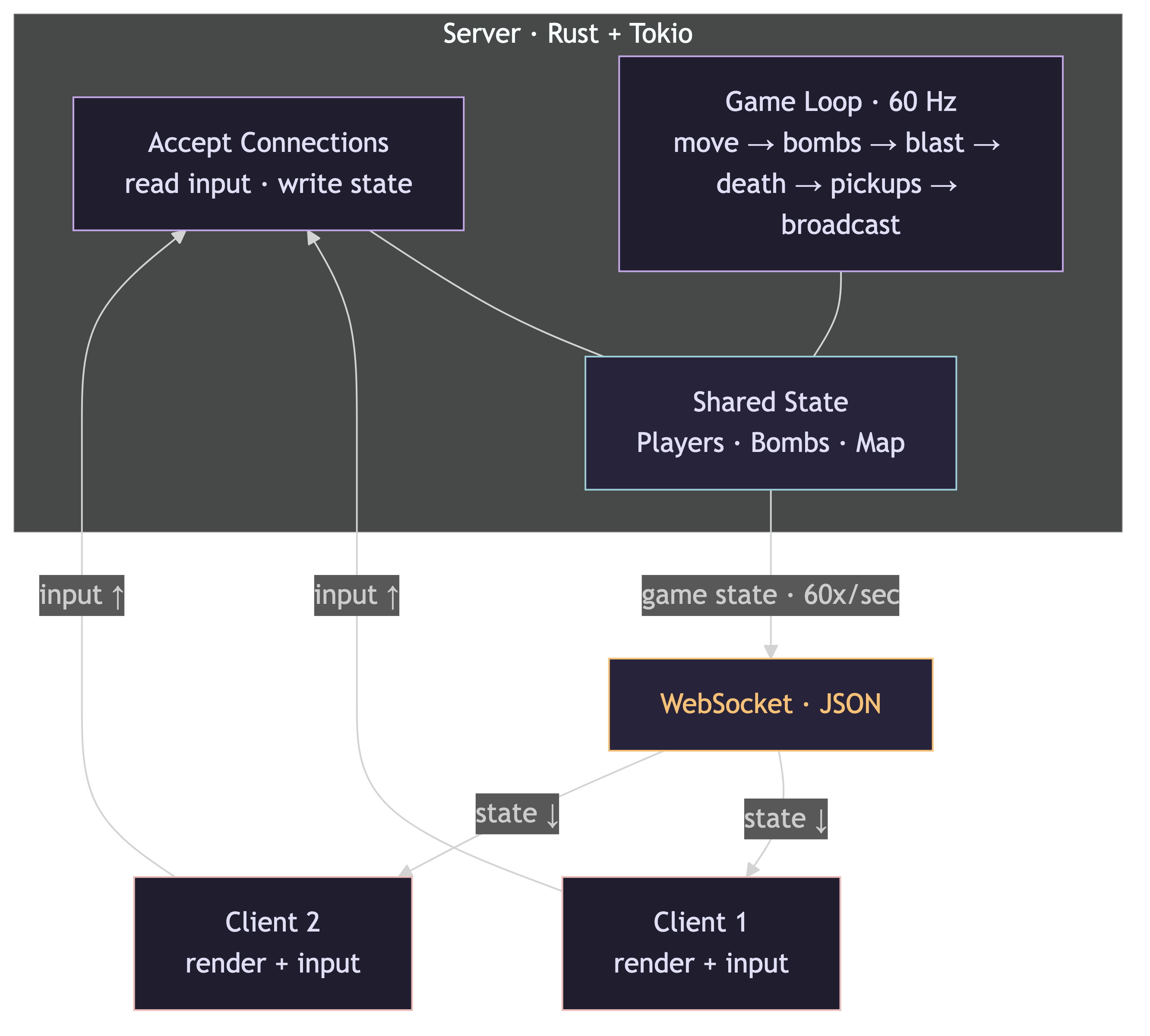

The answer: the server is the boss. The client is just a terminal.

the authoritative model

Here's the deal the client and server make: the client sends intent ("I want to move down", "I want to place a bomb"), and the server decides what actually happens. The client never runs game logic. It never calculates movement or collision. It just renders whatever the server tells it to.

This is called an authoritative server model, and it gives you two things for free:

- No position cheating. The client doesn't decide where a player is, so a modified client can't teleport.

- One source of truth. Every player sees the same game state because it all comes from the same place.

The tradeoff is latency — the client has to wait for the server to respond before anything feels "real." We'll deal with that later (client-side prediction is on the roadmap). For now, on localhost, it's instant.

the game loop

The server runs a loop at 60 ticks per second. Every 16.67 milliseconds or so (1000 ms / 60), it does the same thing:

- Move players — read each player's current direction and speed, calculate a new position, check for wall collision

- Tick bombs — decrement every bomb's timer. When it hits zero, boom

- Resolve explosions — calculate blast radius in four directions (stop at walls, destroy breakable blocks), check for chain reactions where one explosion triggers another bomb

- Update the map — destroyed blocks become floor tiles, with a 40% chance to spawn a power-up

- Check for death — any player standing on an explosion tile dies

- Pick up power-ups — players walking over a power-up get the bonus (extra bomb, bigger blast, faster speed)

- Broadcast — pack up the entire game state and send it to every connected client

That last step is the key. The server doesn't send individual events ("player 2 moved left"). It sends the whole world, 60 times per second. Every client gets the same snapshot, and they all render it.
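The "resolve explosions" step from the list above is the most algorithmic one, so here is a minimal sketch of it. It is not the project's actual code: the `Tile` enum, the `resolve_blast` name, and the choice to let a destroyed block absorb the rest of the blast are all assumptions for illustration. The 15x13 flat map layout comes from later in this post. Power-up spawning and chain reactions are left out.

```rust
const COLS: usize = 15; // map width, from the post
const ROWS: usize = 13; // map height, from the post

// Hypothetical tile type; the post only names the three kinds.
#[derive(Clone, Copy, Debug, PartialEq)]
enum Tile {
    Floor,
    Wall,  // indestructible
    Block, // destructible
}

/// Returns the flat indices of every tile the blast covers and turns any
/// destructible block it reaches into floor.
fn resolve_blast(map: &mut [Tile], col: usize, row: usize, radius: usize) -> Vec<usize> {
    let mut hit = vec![row * COLS + col]; // the bomb's own tile

    // Walk outward one tile at a time: up, down, left, right.
    for (dc, dr) in [(0i32, -1i32), (0, 1), (-1, 0), (1, 0)] {
        for step in 1..=radius as i32 {
            let c = col as i32 + dc * step;
            let r = row as i32 + dr * step;
            if c < 0 || r < 0 || c >= COLS as i32 || r >= ROWS as i32 {
                break; // ran off the map
            }
            let idx = r as usize * COLS + c as usize;
            match map[idx] {
                Tile::Wall => break, // walls stop the blast
                Tile::Block => {
                    map[idx] = Tile::Floor; // the block is destroyed...
                    hit.push(idx);
                    break; // ...and (assumed) absorbs the rest of the blast
                }
                Tile::Floor => hit.push(idx),
            }
        }
    }
    hit
}
```

The returned indices are what the later steps would need: any player or bomb standing on one of them is caught in the explosion.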

every ~16.67 ms:

move players → new positions

tick bombs → countdowns, detonations

resolve blasts → chain reactions, map changes

check deaths → players on explosion tiles

pick up power-ups → extra bombs, bigger blasts, more speed

broadcast state → all clients get the full picture

client as a dumb terminal

The client's job is simple: send input up, render state down.

When you press an arrow key, the client sends a message like {"type": "input", "direction": "down"}. That's it. It doesn't move the player locally. It waits for the next game state from the server and renders the player wherever the server says they are.

When you press the bomb key, the client sends {"type": "place_bomb"}. No coordinates — the server looks up where you are and snaps the bomb to the nearest grid tile. The client doesn't even know where the bomb will end up until the server tells it.
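On the server side, handling those two messages might look roughly like this. It is a sketch under assumptions, not the real handler: the `Player` struct, the function names, and the idea that `x`/`y` mark the top-left corner of the player's box are all made up for illustration. The 64-pixel tile and 48-pixel player sizes are the ones used elsewhere in this post.

```rust
const TILE_SIZE: f32 = 64.0;   // tile size, from the post
const PLAYER_SIZE: f32 = 48.0; // player size, from the post

#[derive(Clone, Copy, Debug, PartialEq)]
enum Direction {
    Up,
    Down,
    Left,
    Right,
    None,
}

// Hypothetical server-side player record.
struct Player {
    x: f32, // assumed: top-left corner of the bounding box, in pixels
    y: f32,
    direction: Direction,
}

/// An "input" message only records intent. The position is untouched here;
/// the game loop moves the player on its next tick.
fn apply_input(player: &mut Player, direction: Direction) {
    player.direction = direction;
}

/// A "place_bomb" message carries no coordinates, so the server derives the
/// tile from the player's centre point.
fn bomb_tile(player: &Player) -> (usize, usize) {
    let center_x = player.x + PLAYER_SIZE / 2.0;
    let center_y = player.y + PLAYER_SIZE / 2.0;
    ((center_x / TILE_SIZE) as usize, (center_y / TILE_SIZE) as usize)
}
```

Using the centre point means a player straddling two tiles drops the bomb on whichever tile they are mostly standing on.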

This feels weird coming from frontend development, where the UI is in charge. Here, the UI is a display. The keyboard is a suggestion box. The server makes all the decisions.

the protocol

The whole conversation between client and server is JSON over WebSockets. Two messages go up, two come down.

Client → Server:

- input: a direction (up, down, left, right, or none)

- place_bomb: no payload, server figures out the rest

Server → Client:

- welcome: sent once on connect. Contains the player's assigned ID and the full map (a flat array of 195 tiles: walls, floors, destructibles)

- game_state: sent every tick. Contains the tick number, all player positions, all bomb positions and timers, all explosions, and all power-ups

That game_state message is the entire game. The client doesn't maintain any state of its own — it throws away the last frame and renders the new one. Dead simple to debug because there's only one place the truth lives.
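To make the four messages concrete, here is one way they could be modelled as Rust types. Treat it as a sketch: only the `type` and `direction` keys and their values come from the examples above. Every field name on the server messages is an assumption, and the hand-rolled `to_json` is there only to keep the example dependency-free (a real project would more likely reach for a JSON library).

```rust
#[derive(Clone, Copy, Debug, PartialEq)]
enum Direction {
    Up,
    Down,
    Left,
    Right,
    None,
}

impl Direction {
    fn as_str(self) -> &'static str {
        match self {
            Direction::Up => "up",
            Direction::Down => "down",
            Direction::Left => "left",
            Direction::Right => "right",
            Direction::None => "none",
        }
    }
}

/// Client → server: the two "intent" messages.
enum ClientMessage {
    Input { direction: Direction },
    PlaceBomb,
}

impl ClientMessage {
    /// Hand-rolled encoding that matches the JSON shown in the post.
    fn to_json(&self) -> String {
        match self {
            ClientMessage::Input { direction } => format!(
                r#"{{"type": "input", "direction": "{}"}}"#,
                direction.as_str()
            ),
            ClientMessage::PlaceBomb => r#"{"type": "place_bomb"}"#.to_string(),
        }
    }
}

/// Server → client. All field names below are illustrative guesses.
enum ServerMessage {
    Welcome {
        player_id: u32,
        map: Vec<u8>, // flat array of 195 tiles
    },
    GameState {
        tick: u64,
        players: Vec<(f32, f32)>,      // positions
        bombs: Vec<(usize, u32)>,      // (tile index, ticks left)
        explosions: Vec<usize>,        // tile indices
        power_ups: Vec<usize>,         // tile indices
    },
}

impl ServerMessage {
    /// The value that would go in the message's "type" field.
    fn type_tag(&self) -> &'static str {
        match self {
            ServerMessage::Welcome { .. } => "welcome",
            ServerMessage::GameState { .. } => "game_state",
        }
    }
}
```

Modelling each direction as an enum keeps the `match` statements exhaustive, so adding a fifth message later means the compiler points at every place that has to handle it.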

the map

The arena is a 15x13 grid. Some tiles are indestructible walls (the border and a checkerboard of pillars), some are destructible blocks (randomly placed at ~70% density), and the rest is open floor.

The map is stored as a flat array — row * 15 + col gives you the index. No 2D arrays, no nested structures. Collision detection checks the four corners of a player's bounding box against the map to see if any of them land on a solid tile.

One design detail: players are 48 pixels wide, but tiles are 64 pixels. That 16-pixel gap is intentional — it gives you enough forgiveness to navigate between walls without requiring pixel-perfect alignment. Without it, the game feels frustrating. With it, movement feels natural.
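Here is a small sketch of the flat-array lookup and the four-corner check. The grid, tile, and player sizes are the ones from this post. The `Tile` enum, the function names, the top-left-corner convention for `x`/`y`, and treating anything off the map as solid are assumptions for illustration.

```rust
const COLS: usize = 15;
const ROWS: usize = 13;
const TILE_SIZE: f32 = 64.0;
const PLAYER_SIZE: f32 = 48.0;

// Hypothetical tile type; the post only names the three kinds.
#[derive(Clone, Copy, Debug, PartialEq)]
enum Tile {
    Floor,
    Wall,
    Block,
}

/// Flat-array index: row * 15 + col.
fn tile_index(col: usize, row: usize) -> usize {
    row * COLS + col
}

/// Is the tile under this pixel solid? Anything off the map counts as solid.
fn is_solid(map: &[Tile], px: f32, py: f32) -> bool {
    if px < 0.0 || py < 0.0 {
        return true;
    }
    let col = (px / TILE_SIZE) as usize;
    let row = (py / TILE_SIZE) as usize;
    if col >= COLS || row >= ROWS {
        return true;
    }
    map[tile_index(col, row)] != Tile::Floor
}

/// Checks the four corners of a 48x48 box whose top-left corner is (x, y).
fn collides(map: &[Tile], x: f32, y: f32) -> bool {
    let far = PLAYER_SIZE - 1.0; // offset of the last pixel inside the box
    [(x, y), (x + far, y), (x, y + far), (x + far, y + far)]
        .iter()
        .any(|&(cx, cy)| is_solid(map, cx, cy))
}
```

With a wall in column 2 (starting at pixel 128), a player in column 1 fits anywhere with their left edge between 64 and 80. That 16-pixel range is the forgiveness described above.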

what connects to what

The connection handlers and the game loop are separate async tasks that share state through Arc<Mutex> — Rust's pattern for safe concurrent access. The connection handler writes player input, the game loop reads it and writes back the updated positions. The broadcast channel is a one-to-many pipe: the game loop sends once, every client receives.
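The shape of that sharing can be sketched with nothing but the standard library. To be clear about the substitutions: this uses OS threads instead of async tasks, and a list of `std::sync::mpsc` senders as a stand-in for a real broadcast channel. The names and the placeholder snapshot string are invented for the example; only the `Arc<Mutex<...>>` pattern itself comes from the project.

```rust
use std::collections::HashMap;
use std::sync::{mpsc, Arc, Mutex};
use std::thread;

#[derive(Clone, Copy, Debug, PartialEq)]
enum Direction {
    Up,
    Down,
    Left,
    Right,
    None,
}

/// Shared between connection handlers (writers) and the game loop (reader).
type Inputs = Arc<Mutex<HashMap<u32, Direction>>>;

/// What a connection handler does when an input message arrives.
fn record_input(inputs: &Inputs, player_id: u32, dir: Direction) {
    inputs.lock().unwrap().insert(player_id, dir);
}

/// The loop's side: read the inputs under the lock, build one snapshot,
/// then send that same snapshot to every subscriber.
fn tick_and_broadcast(inputs: &Inputs, subscribers: &[mpsc::Sender<String>]) {
    let snapshot = {
        let guard = inputs.lock().unwrap();
        // Placeholder payload; the real one would be the game_state JSON.
        format!("{} player input(s) this tick", guard.len())
    }; // lock released here, before any sending

    for tx in subscribers {
        let _ = tx.send(snapshot.clone()); // ignore clients that have gone away
    }
}

/// Two "connection handlers" write, then one tick fans out to two clients.
fn demo() -> Vec<String> {
    let inputs: Inputs = Arc::new(Mutex::new(HashMap::new()));

    let handles: Vec<_> = (1..=2u32)
        .map(|id| {
            let inputs = Arc::clone(&inputs);
            thread::spawn(move || record_input(&inputs, id, Direction::Down))
        })
        .collect();
    for handle in handles {
        handle.join().unwrap();
    }

    let (tx_a, rx_a) = mpsc::channel();
    let (tx_b, rx_b) = mpsc::channel();
    tick_and_broadcast(&inputs, &[tx_a, tx_b]);
    vec![rx_a.recv().unwrap(), rx_b.recv().unwrap()]
}
```

The detail worth copying is the scope around the lock: the loop holds the mutex only long enough to read the inputs, so a slow client can never block a connection handler from recording the next key press.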

next up

The architecture works. The server is authoritative. The protocol is simple. But all of this had to be written in Rust — a language I'd never touched before this project.

Next post: what it's actually like to write Rust when you've spent most of your career in JavaScript.